Advanced Record Analysis – 2392528000, кфефензу, 8337665238, 18003465538, 665440387

Advanced Record Analysis scrutinizes the identifiers 2392528000, кфефензу, 8337665238, 18003465538, and 665440387 with methodical rigor. The approach cross-validates encoding, provenance, and lineage to separate incidental variance from meaningful anomalies. It centers on data hygiene, traceability, and governance metrics to produce reproducible, evidence-driven conclusions. Ambiguities and potential risks are mapped against documented controls, leaving unresolved questions that justify closer examination and subsequent verification steps.

What Advanced Record Analysis Reveals About Your Data Integrity

Advanced record analysis serves as a diagnostic lens for data integrity: it systematically identifies discrepancies that may indicate corruption, loss, or tampering. It catalogs those deviations, quantifies the risk each one carries, and supports controlled remediation. Because every finding is tied to reproducible, traceable evidence, stakeholders can verify the conclusions independently rather than take them on trust.

Decoding the Identifiers: 2392528000, кфефензу, 8337665238, 18003465538, 665440387

The preceding section established a framework for interpreting anomalies across identifiers. The following identifiers, 2392528000, кфефензу, 8337665238, 18003465538, and 665440387, are parsed for pattern, encoding, and provenance. Four of them are purely numeric strings of 9 to 11 digits, while кфефензу is written in Cyrillic script, so the set mixes at least two encodings. Each element is cross-validated to separate incidental variance from meaningful signals, and the intermediate evidence is kept so that an observer can question and re-check the results.
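The pattern and encoding checks described above can be sketched in a few lines of Python. This is a minimal illustration, not the article's actual procedure: the function names `profile` and `remap_layout` and the layout table are hypothetical, and the idea that the Cyrillic string might be Latin text typed under a Russian keyboard layout is only one hypothesis worth testing.

```python
import unicodedata

# The five identifiers named in the text.
IDENTIFIERS = ["2392528000", "кфефензу", "8337665238", "18003465538", "665440387"]

# Hypothetical helper table: Russian ЙЦУКЕН letter keys mapped to the QWERTY
# keys in the same physical positions (lowercase letters only).
_CYRILLIC_KEYS = "йцукенгшщзфывапролдячсмить"
_LATIN_KEYS = "qwertyuiopasdfghjklzxcvbnm"
LAYOUT_MAP = str.maketrans(_CYRILLIC_KEYS, _LATIN_KEYS)


def profile(identifier: str) -> dict:
    """Summarize surface features of one identifier: length, digits, scripts."""
    scripts = set()
    for ch in identifier:
        if ch.isdigit():
            scripts.add("DIGIT")
        elif ch.isalpha():
            # The first word of a letter's Unicode name is its script,
            # for example "CYRILLIC SMALL LETTER KA" -> "CYRILLIC".
            scripts.add(unicodedata.name(ch, "UNKNOWN").split()[0])
        else:
            scripts.add("OTHER")
    return {
        "value": identifier,
        "length": len(identifier),
        "all_digits": identifier.isascii() and identifier.isdigit(),
        "scripts": sorted(scripts),
    }


def remap_layout(identifier: str) -> str:
    """Re-read Cyrillic letters as the QWERTY keys in the same positions."""
    return identifier.translate(LAYOUT_MAP)


profiles = [profile(i) for i in IDENTIFIERS]
for p in profiles:
    print(p)
print(remap_layout("кфефензу"))
```

Under this mapping the Cyrillic identifier reads as `ratatype`. That is suggestive of a keyboard-layout mismatch, but it is not evidence of provenance on its own; it is a lead to confirm against the system that produced the record.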

Practical Techniques for Anomaly Detection in Complex Records

Practical techniques for anomaly detection in complex records require a disciplined, data-driven approach that combines statistical rigor with domain awareness. The method emphasizes data lineage, robust anomaly scoring, and metadata hygiene to ensure traceability. Noise filtering isolates genuine signals, improving model clarity. Clear benchmarks and documented assumptions support reproducibility, while concise reporting aids actionable decision-making without overinterpretation.
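One common way to make anomaly scoring robust is to replace the mean and standard deviation with the median and the median absolute deviation (MAD), so that the outliers being hunted do not distort the baseline. The sketch below assumes this technique; the article does not name a specific scoring method. The sample values and the 3.5 cutoff are illustrative assumptions.

```python
from statistics import median


def robust_scores(values, scale=1.4826):
    """Return a median/MAD-based score for each value.

    The factor 1.4826 makes the MAD comparable to a standard deviation
    when the underlying data are roughly normal.
    """
    values = list(values)
    med = median(values)
    mad = median([abs(v - med) for v in values])
    if mad == 0:
        # Simplification: with zero spread no score can be computed, so
        # everything is reported as 0.0. A real pipeline needs a fallback.
        return [0.0 for _ in values]
    return [(v - med) / (scale * mad) for v in values]


# Hypothetical measurements, for example field lengths seen in one column.
sample = [10, 12, 11, 9, 13, 10, 42]
scores = robust_scores(sample)

# Assumed cutoff: flag anything more than 3.5 robust deviations from the median.
THRESHOLD = 3.5
flagged = [v for v, s in zip(sample, scores) if abs(s) > THRESHOLD]
print(flagged)
```

Documenting the scale factor and the threshold alongside the results is what makes a run like this reproducible by someone else.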

Building a Robust Analysis Workflow for Ongoing Data Quality

A robust analysis workflow for ongoing data quality hinges on repeatable processes, clear ownership, and measurable controls that keep working as the data evolves.

The framework emphasizes data lineage and disciplined feature engineering, enabling traceability and reproducibility.

It integrates continuous validation, versioned data, and governance metrics, supporting transparent decision-making while preserving freedom to adapt methodologies as data landscapes change.
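As a rough sketch of how versioned data and continuous validation might fit together, the example below fingerprints a batch of records with SHA-256 and runs two simple checks on every batch. The record layout (a dictionary with an `id` field), the function names, and the rules themselves are assumptions made for illustration.

```python
import hashlib
import json


def fingerprint(records) -> str:
    """Hash a canonical JSON rendering so identical data gets an identical version ID."""
    canonical = json.dumps(
        records, sort_keys=True, ensure_ascii=False, separators=(",", ":")
    )
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def validate(records) -> list:
    """Return (index, problem) pairs for records that break the assumed rules."""
    problems = []
    seen = set()
    for index, record in enumerate(records):
        identifier = record.get("id", "")
        if not identifier:
            problems.append((index, "missing id"))
            continue
        if identifier in seen:
            problems.append((index, "duplicate id"))
        seen.add(identifier)
    return problems


# Two hypothetical versions of the same small dataset.
version_1 = [{"id": "2392528000"}, {"id": "8337665238"}]
version_2 = version_1 + [{"id": "8337665238"}, {"id": ""}]

print(fingerprint(version_1))
print(validate(version_2))
```

Storing the fingerprint next to each validation report makes it possible to say exactly which version of the data a given finding refers to.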

Frequently Asked Questions

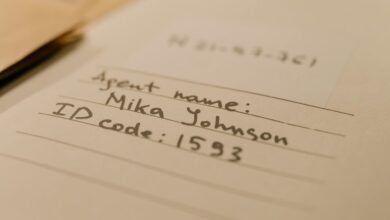

What Are the Origins of the Identifiers Used?

The identifiers originate from standardized coding and cataloging practices, which indicates that they were assigned intentionally. Their origins point to systematic usage, and that usage reflects hierarchical relationships, metadata needs, and traceable provenance across datasets and archival records.

How Do These Numbers Affect Audit Trails?

The numbers influence audit trails by anchoring data lineage to immutable identifiers. They enable audit sampling, traceability, and reproducibility, which supports evidence-driven assessments and transparent, verifiable record integrity.
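One way to make an audit trail tamper-evident, offered here as an illustrative assumption rather than the method the answer above has in mind, is to chain log entries by hash so that each entry commits to the one before it. The entry fields and the function names `append_entry` and `verify` are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    """Hash the canonical JSON form of an entry body."""
    encoded = json.dumps(body, sort_keys=True, ensure_ascii=False).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


def append_entry(log: list, identifier: str, action: str) -> None:
    """Append an entry that records the hash of the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    body = {"identifier": identifier, "action": action, "prev": prev_hash}
    log.append({**body, "hash": _digest(body)})


def verify(log: list) -> bool:
    """Recompute every hash and check that the chain links are intact."""
    prev_hash = GENESIS
    for entry in log:
        body = {key: entry[key] for key in ("identifier", "action", "prev")}
        if entry["prev"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True


audit_log = []
append_entry(audit_log, "2392528000", "created")
append_entry(audit_log, "2392528000", "reviewed")
print(verify(audit_log))
```

Editing any earlier entry changes its hash and breaks every later link, so an auditor who samples the log can detect the change by re-running `verify`.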

Can These Codes Imply Regulatory Compliance Issues?

The codes may carry compliance implications and warrant mapping against the regulations that apply to the data. They can signal potential gaps in governance, which call for an evidence-based review of audit trails to confirm alignment with applicable standards and to support a transparent risk assessment.

Are There Privacy Concerns With Sharing Identifiers?

Privacy concerns arise whenever identifiers are shared, because personal traces can be reassembled from them. The analysis shows potential linkage risks, data minimization gaps, and legal exposure, which warrant strict governance, auditing, and transparent user consent.
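A common mitigation for linkage risk is to share keyed pseudonyms instead of raw identifiers. The sketch below assumes HMAC-SHA256 with a secret key that never leaves the data owner; the function name `pseudonymize` and the key values are hypothetical, and a real deployment would load the key from a secrets manager rather than hard-code it.

```python
import hashlib
import hmac


def pseudonymize(identifier: str, key: bytes) -> str:
    """Replace an identifier with a keyed hash that is stable under the same key."""
    return hmac.new(key, identifier.encode("utf-8"), hashlib.sha256).hexdigest()


# Hypothetical keys for illustration only.
key_for_partner_a = b"example-key-a"
key_for_partner_b = b"example-key-b"

token_a = pseudonymize("8337665238", key_for_partner_a)
token_b = pseudonymize("8337665238", key_for_partner_b)
print(token_a != token_b)
```

Using a different key per recipient keeps records joinable within one shared dataset while preventing two recipients from linking their datasets to each other. Pseudonymized data can still be personal data under many regulations, so this reduces exposure rather than removing it.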

What Tools Best Visualize Such Mixed Identifiers?

Data lineage visualization tools, used with sound visualization practices, let analysts map mixed identifiers and reveal the relationships among them while preserving privacy. The approach emphasizes traceability, modular dashboards, and evidence-based evaluation, so that each view can be traced back to the records behind it.

Conclusion

Advanced Record Analysis demonstrates that cross-validating encoding, provenance, and lineage yields actionable signals about data integrity. The examined identifiers reveal that incidental variance can masquerade as anomaly unless contextualized by governance metrics and traceability. The evidence supports a robust workflow: rigorous validation, transparent metadata, and reproducible scoring. By focusing on data hygiene and documented remedies, organizations can distinguish meaningful anomalies from noise, strengthening governance and resilience in evolving data landscapes.