Identifier & Keyword Validation – нщгекфмуд, 3886405305, Ctylgekmc, sweeetbby333, сниукы

Identifier and keyword validation demand a disciplined, methodical approach. This discussion sets out criteria for length, character sets, patterns, and language considerations, and separates identifier integrity (structure) from keyword meaning (semantics). The goal is reliable inputs that interoperate across locales and platforms without sacrificing performance. Edge cases and governance are examined to anticipate real-world constraints, and the closing sections turn to implementation trade-offs and the techniques and tools that make validation scale.

What Is Identifier & Keyword Validation and Why It Matters

Identifier and keyword validation is a systematic process that confirms identifiers and keywords conform to defined formats, character sets, and domain-specific rules. It draws a clear boundary between valid and invalid inputs, so downstream systems can handle data reliably.

Identifier validation targets structural integrity (is the string well formed?), while keyword verification assesses semantic relevance (does the term mean something in this domain?). Keeping the two concerns separate supports accuracy, security, and interoperability, and keeps validation rules standardized and transparent.
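A minimal Python sketch of that split, assuming a hypothetical ASCII identifier pattern and a hypothetical domain vocabulary (`ALLOWED_KEYWORDS`); neither comes from the text. The structural check asks only whether a string is well formed, while the semantic check also asks whether the domain recognizes it.

```python
import re

# Hypothetical structural rule: an ASCII letter or underscore, then letters, digits, or underscores.
IDENTIFIER_PATTERN = re.compile(r"[A-Za-z_][A-Za-z0-9_]*")

# Hypothetical domain vocabulary used for the semantic (keyword) check.
ALLOWED_KEYWORDS = frozenset({"status", "priority", "owner"})


def is_structurally_valid(identifier: str) -> bool:
    """Structural check: does the string have the required shape?"""
    return IDENTIFIER_PATTERN.fullmatch(identifier) is not None


def is_semantically_valid(keyword: str) -> bool:
    """Semantic check: is a well-formed keyword also meaningful in this domain?"""
    return is_structurally_valid(keyword) and keyword in ALLOWED_KEYWORDS


print(is_structurally_valid("sweeetbby333"))  # True: well formed
print(is_semantically_valid("sweeetbby333"))  # False: not a domain keyword
print(is_structurally_valid("3886405305"))    # False: starts with a digit
```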

Practical Criteria: Length, Characters, Patterns, and Language Considerations

Practical criteria for identifiers and keywords hinge on concrete, repeatable rules: acceptable length, permitted character sets, recognizable patterns, and language considerations that influence usability and processing.

In practice, each criterion should be written as a precise, testable constraint, for identifiers and keywords alike.
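The four criteria can be encoded as explicit, repeatable rules. The sketch below is one possible shape, assuming illustrative limits (3 to 32 characters; lowercase ASCII letters, digits, and underscores; must start with a letter) that the text does not prescribe; `IdentifierRules` and `check` are hypothetical names. Restricting the character set to ASCII is itself a language decision: Cyrillic samples such as сниукы fail it.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class IdentifierRules:
    # Illustrative defaults only; the text does not prescribe specific limits.
    min_length: int = 3
    max_length: int = 32
    # Language decision: this character set is ASCII-only, so Cyrillic input is rejected.
    allowed_chars: frozenset = frozenset("abcdefghijklmnopqrstuvwxyz0123456789_")
    # Pattern rule: a lowercase letter first, then letters, digits, or underscores.
    pattern: str = r"[a-z][a-z0-9_]*"


def check(identifier: str, rules: IdentifierRules = IdentifierRules()) -> list:
    """Return every rule the identifier violates; an empty list means it passed."""
    problems = []
    if not rules.min_length <= len(identifier) <= rules.max_length:
        problems.append("length")
    if any(ch not in rules.allowed_chars for ch in identifier):
        problems.append("characters")
    if re.fullmatch(rules.pattern, identifier) is None:
        problems.append("pattern")
    return problems


for sample in ["sweeetbby333", "Ctylgekmc", "сниукы", "3886405305"]:
    print(sample, check(sample))
```

Returning every violation, rather than stopping at the first, produces a complete error report at the cost of always running all checks.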

Two adjacent topics also shape these criteria: naming conventions and validation heuristics.
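Naming conventions and validation heuristics can also be checked mechanically. This sketch assumes three common conventions and one hypothetical heuristic (runs of three identical characters, as in sweeetbby333); a heuristic flags a name for human review rather than rejecting it. Note that a single lowercase word counts as snake_case here.

```python
import re
from typing import Optional

# Hypothetical convention patterns; a project would keep only the ones it actually uses.
CONVENTIONS = {
    "snake_case": re.compile(r"[a-z][a-z0-9]*(?:_[a-z0-9]+)*"),
    "camelCase": re.compile(r"[a-z][a-z0-9]*(?:[A-Z][a-z0-9]*)+"),
    "PascalCase": re.compile(r"(?:[A-Z][a-z0-9]*)+"),
}

# Heuristic, not a rule: three or more identical characters in a row may signal a typo.
REPEATED_RUN = re.compile(r"(.)\1\1")


def detect_convention(name: str) -> Optional[str]:
    """Return the first convention the name fully matches, or None."""
    for label, pattern in CONVENTIONS.items():
        if pattern.fullmatch(name):
            return label
    return None


def needs_review(name: str) -> bool:
    """Flag names that follow no known convention or trip the repeated-run heuristic."""
    return detect_convention(name) is None or REPEATED_RUN.search(name) is not None


for sample in ["user_id", "userId", "Ctylgekmc", "sweeetbby333", "3886405305"]:
    print(sample, detect_convention(sample), needs_review(sample))
```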

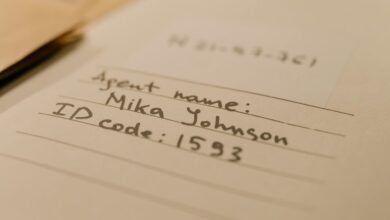

Real-World Challenges and How to Handle Edge Cases (нщгекфмуд, 3886405305, Ctylgekmc, sweeetbby333, сниукы)

Real-world scenarios reveal that edge cases often arise from diverse inputs, platform constraints, and evolving tokenization rules, necessitating a disciplined approach to validation and handling.
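One concrete source of such inputs is a wrong keyboard layout. Three of the sample inputs in this section's heading decode cleanly when the Russian ЙЦУКЕН and US QWERTY layouts are swapped: нщгекфмуд reads as youtravel, сниукы as cybers, and Ctylgekmc as Сендпульс. The sketch below assumes a partial, unshifted key mapping and a hypothetical `known_words` vocabulary; a real system would need the full layouts and a proper dictionary.

```python
from typing import Dict, Iterable, Optional

# Keys in the same physical positions on US QWERTY and Russian JCUKEN (unshifted only).
QWERTY_KEYS = "qwertyuiop[]asdfghjkl;'zxcvbnm,."
JCUKEN_KEYS = "йцукенгшщзхъфывапролджэячсмитьбю"


def _build_table(src: str, dst: str) -> Dict[int, str]:
    """Map each key to its counterpart; add uppercase pairs only for letter-to-letter keys."""
    table = {}
    for s, d in zip(src, dst):
        table[ord(s)] = d
        if s.isalpha() and d.isalpha():
            table[ord(s.upper())] = d.upper()
    return table


CYRILLIC_TO_LATIN = _build_table(JCUKEN_KEYS, QWERTY_KEYS)
LATIN_TO_CYRILLIC = _build_table(QWERTY_KEYS, JCUKEN_KEYS)


def suggest_layout_fix(identifier: str, known_words: Iterable[str]) -> Optional[str]:
    """Return the layout-swapped reading if it matches a known word, else None."""
    vocabulary = {w.casefold() for w in known_words}
    for table in (CYRILLIC_TO_LATIN, LATIN_TO_CYRILLIC):
        swapped = identifier.translate(table)
        if swapped != identifier and swapped.casefold() in vocabulary:
            return swapped
    return None


known = ["youtravel", "cybers", "сендпульс"]  # hypothetical vocabulary
for sample in ["нщгекфмуд", "сниукы", "Ctylgekmc", "sweeetbby333"]:
    print(sample, "->", suggest_layout_fix(sample, known))
```

Checking the swapped reading against a vocabulary matters: every Latin string has a Cyrillic "reading", so without it the detector would flag everything.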

A methodical approach documents each failure mode and prioritizes reliability over raw speed: fast, low-latency checks run first, but never at the cost of accuracy. Cross-locale handling keeps results consistent, which leads to resilient designs and predictable behavior across environments and user contexts.
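Cross-locale consistency starts with knowing which scripts an identifier mixes, since mixed-script strings are a common source of look-alike collisions. Python's standard library does not expose the Unicode Script property, so this sketch assumes the first word of each letter's Unicode character name is a usable stand-in; a production check would use the Script property (UAX #24) and the mixed-script guidance in UTS #39. The input `p\u0430ypal` is a hypothetical example whose second letter is Cyrillic.

```python
import unicodedata


def scripts_used(identifier: str) -> set:
    """Approximate the scripts present in a string.

    The stdlib exposes no Unicode Script property, so the first word of each
    letter's character name ("LATIN", "CYRILLIC", ...) stands in for it.
    Non-letters such as digits are ignored.
    """
    return {
        unicodedata.name(ch, "UNKNOWN").split()[0]
        for ch in identifier
        if ch.isalpha()
    }


def classify_edge_case(identifier: str) -> str:
    if not identifier:
        return "empty"
    if identifier.isdigit():
        return "numeric-only"
    if len(scripts_used(identifier)) > 1:
        return "mixed-script"
    return "single-script"


for sample in ["нщгекфмуд", "3886405305", "Ctylgekmc", "sweeetbby333", "p\u0430ypal"]:
    print(repr(sample), classify_edge_case(sample))
```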

Implementing Robust Validation: Techniques, Tools, and Performance Tips

Implementing robust validation combines structured technique with appropriate tooling to ensure correctness, performance, and resilience. The approach relies on repeatable patterns, modular verifiers, and codified rules, which lets governance scale without slowing validation down. Techniques include layered validation, property-based testing, and formal checks; tooling includes CI feedback and profiling. Weigh performance trade-offs and security considerations explicitly so that reliability holds as design choices evolve.
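A sketch of layered validation with modular verifiers, assuming three hypothetical layers ordered from cheapest to most expensive, `functools.lru_cache` to memoize verdicts for repeated inputs, and a small randomized property test built on the standard library as a stand-in for a property-testing library such as Hypothesis. The property checked is that everything the validator accepts is also a valid Python identifier.

```python
import random
import re
import string
from functools import lru_cache
from typing import Callable, Optional, Tuple

# Each hypothetical verifier returns None on success or a short reason on failure.
Verifier = Callable[[str], Optional[str]]

IDENT_RE = re.compile(r"[A-Za-z_][A-Za-z0-9_]*")


def check_length(text: str) -> Optional[str]:
    return None if 1 <= len(text) <= 64 else "length"


def check_charset(text: str) -> Optional[str]:
    return None if text.isascii() else "non-ascii"


def check_pattern(text: str) -> Optional[str]:
    return None if IDENT_RE.fullmatch(text) else "pattern"


# Layers run cheapest first, so many bad inputs are rejected before the regex runs.
LAYERS: Tuple[Verifier, ...] = (check_length, check_charset, check_pattern)


@lru_cache(maxsize=10_000)
def validate(text: str) -> Optional[str]:
    """Return the first failing layer's reason, or None if every layer passes."""
    for layer in LAYERS:
        reason = layer(text)
        if reason is not None:
            return reason
    return None


def property_check(trials: int = 1_000, seed: int = 0) -> None:
    """Randomized property test: anything validate() accepts is a Python identifier."""
    rng = random.Random(seed)
    alphabet = string.ascii_letters + string.digits + "_-"
    for _ in range(trials):
        candidate = "".join(rng.choice(alphabet) for _ in range(rng.randint(0, 10)))
        if validate(candidate) is None:
            assert candidate.isidentifier(), candidate


property_check()
print(validate("sweeetbby333"), validate("сниукы"), validate("3886405305"))
```

The cache is bounded on purpose: an unbounded cache would let a stream of unique inputs grow memory without limit.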

Frequently Asked Questions

How to Handle Unicode Normalization in Identifiers?

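Apply Unicode normalization consistently: choose one form (for example NFC or NFKC) and normalize every identifier before storing or comparing it, so that canonically equivalent strings compare as equal. Beware normalization pitfalls, such as compatibility forms like NFKC also merging visually distinct characters, and pair normalization with cross-locale keyword handling to prevent collisions or misidentification across locales.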
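A sketch of consistent normalization using the standard `unicodedata` module. Python applies the same idea to its own source code: PEP 3131 specifies that identifiers are converted to NFKC while parsing. The example shows two canonically equivalent spellings of "café" comparing equal after NFC, and the pitfall that NFKC also merges compatibility characters such as the "ﬁ" ligature and superscript digits. `canonical` and `find_collisions` are hypothetical helper names.

```python
import unicodedata
from collections import defaultdict


def canonical(identifier: str, form: str = "NFC") -> str:
    """Normalize before storing or comparing; pick one form and use it everywhere."""
    return unicodedata.normalize(form, identifier)


def find_collisions(identifiers, form: str = "NFKC") -> dict:
    """Group distinct inputs that become identical after normalization."""
    groups = defaultdict(list)
    for ident in identifiers:
        groups[canonical(ident, form)].append(ident)
    return {key: originals for key, originals in groups.items() if len(originals) > 1}


composed = "caf\u00e9"      # é as one precomposed code point
decomposed = "cafe\u0301"   # e followed by COMBINING ACUTE ACCENT
print(composed == decomposed)                        # False: different code points
print(canonical(composed) == canonical(decomposed))  # True: canonically equivalent

# Pitfall: NFKC folds compatibility characters, merging visibly different names.
print(find_collisions(["file", "\ufb01le", "x2", "x\u00b2"]))
```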

Can Keywords Differ by Locale or Domain?

Yes, keywords can differ by locale or domain. For example, a locale-aware search engine may map the same concept to different terms in French than in English. Locale-aware mapping and domain-specific normalization keep keyword interpretation consistent across contexts.
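A sketch of locale- and domain-aware keyword mapping, assuming hypothetical lookup tables keyed by `(locale, domain)`; the terms and the canonical keyword `vehicle` are illustrative and not taken from any real search engine. Each term is NFC-normalized and case-folded before lookup so that case and normalization variants resolve to the same entry.

```python
import unicodedata
from typing import Optional

# Hypothetical (locale, domain) tables mapping surface terms to one canonical keyword.
KEYWORD_TABLES = {
    ("en", "retail"): {"car": "vehicle", "automobile": "vehicle"},
    ("fr", "retail"): {"voiture": "vehicle", "automobile": "vehicle"},
    ("en", "finance"): {"bond": "fixed_income"},
}


def canonical_keyword(term: str, locale: str, domain: str) -> Optional[str]:
    """Normalize and case-fold the term, then look it up in the table for its context."""
    table = KEYWORD_TABLES.get((locale, domain), {})
    key = unicodedata.normalize("NFC", term).casefold()
    return table.get(key)


print(canonical_keyword("Voiture", "fr", "retail"))  # vehicle
print(canonical_keyword("voiture", "en", "retail"))  # None: not an English retail term
print(canonical_keyword("bond", "en", "retail"))     # None: only defined for finance
```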

What Metrics Define Validation Performance Impact?

Validation performance is measured by latency and by error rates, which together affect throughput, reliability, and user experience. Useful metrics include median and tail latency (for example p50 and p99), precision, recall, false-positive and false-negative rates, and stability under varying traffic.
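A sketch of how these metrics could be computed from a labeled sample, assuming the validator returns `True` for accepted inputs and treating rejection as the positive class. The labels are hypothetical policy decisions, chosen so that `str.isidentifier` produces one false positive ("room-42") and one false negative ("class"). `statistics.quantiles` needs at least two samples, and real latency figures need far more calls than this to mean anything.

```python
import statistics
import time
from typing import Callable, Dict, Iterable, Tuple


def measure(validator: Callable[[str], bool],
            labeled: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Score a validator on (input, should_be_valid) pairs.

    A "positive" is a rejected input: a false positive is a valid input that was
    rejected, and a false negative is an invalid input that slipped through.
    """
    tp = fp = fn = 0
    latencies = []
    for text, should_be_valid in labeled:
        start = time.perf_counter()
        accepted = validator(text)
        latencies.append(time.perf_counter() - start)
        if not accepted and not should_be_valid:
            tp += 1
        elif not accepted and should_be_valid:
            fp += 1
        elif accepted and not should_be_valid:
            fn += 1
    # 99 cut points: index 49 is the median, index 98 the 99th percentile.
    cuts = statistics.quantiles(latencies, n=100, method="inclusive")
    return {
        "precision": tp / (tp + fp) if tp + fp else 0.0,
        "recall": tp / (tp + fn) if tp + fn else 0.0,
        "false_negative_rate": fn / (tp + fn) if tp + fn else 0.0,
        "p50_seconds": cuts[49],
        "p99_seconds": cuts[98],
    }


# Hypothetical policy labels: reject the reserved word "class", allow "room-42".
sample = [
    ("sweeetbby333", True),
    ("3886405305", False),
    ("user_id", True),
    ("bad name", False),
    ("class", False),
    ("room-42", True),
]
print(measure(str.isidentifier, sample))
```

With these labels, precision and recall both come out at 2/3 and the false-negative rate at 1/3.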

Should Identifiers Be Case-Sensitive Across Systems?

Case sensitivity should not be imposed universally across systems; what matters is one consistent, documented policy. System designers must also address Unicode handling, normalization, and inter-system mapping to preserve interoperability and predictable behavior.
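When identifiers move from a case-sensitive system to a case-insensitive one, the first thing to check is which names would collide. This sketch, with a hypothetical helper name, uses `str.casefold()` rather than `lower()`, because `lower()` keeps "Straße" distinct from "STRASSE" while full Unicode case folding maps both to "strasse".

```python
from collections import defaultdict


def case_collisions(identifiers) -> dict:
    """Distinct identifiers that would merge in a case-insensitive system."""
    groups = defaultdict(set)
    for ident in identifiers:
        groups[ident.casefold()].add(ident)
    return {key: sorted(names) for key, names in groups.items() if len(names) > 1}


names = ["Ctylgekmc", "ctylgekmc", "sweeetbby333", "Straße", "STRASSE"]
print(case_collisions(names))
```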

How to Audit Validation Rules Over Time?

Audit validation rules by logging every change, tagging each rule set with a version, and monitoring drift in how inputs are classified. Regular, systematic review shows when rules have drifted, supports governance, and still leaves room to adapt the rules within defined standards and timelines.
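A sketch of versioned rule auditing, assuming hypothetical rule sets `v1` and `v2` that differ only in maximum length. Each version gets a short content fingerprint for tagging log entries, and drift is measured as the list of sample inputs whose verdict changes between versions.

```python
import hashlib
import json
import re

# Hypothetical versioned rule sets; in practice these would live under version control.
RULESETS = {
    "v1": {"pattern": r"[a-z][a-z0-9_]*", "max_length": 32},
    "v2": {"pattern": r"[a-z][a-z0-9_]*", "max_length": 16},
}


def fingerprint(rules: dict) -> str:
    """Stable short hash of a rule set, suitable for tagging log entries."""
    canonical = json.dumps(rules, sort_keys=True).encode("utf-8")
    return hashlib.sha256(canonical).hexdigest()[:12]


def accepts(rules: dict, text: str) -> bool:
    return len(text) <= rules["max_length"] and re.fullmatch(rules["pattern"], text) is not None


def drift(old: dict, new: dict, corpus) -> list:
    """Inputs whose verdict changes between two rule versions."""
    return [text for text in corpus if accepts(old, text) != accepts(new, text)]


corpus = ["sweeetbby333", "a_very_long_identifier_name", "3886405305"]
changed = drift(RULESETS["v1"], RULESETS["v2"], corpus)
print(f"v1 {fingerprint(RULESETS['v1'])} -> v2 {fingerprint(RULESETS['v2'])}: {changed}")
```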

Conclusion

In the quiet workshop of validation, inputs stand like tempered steel blades, measured and scrutinized before they gleam. Each rule is a whetstone, every constraint a well-set hinge, turning smoothly under inspection. The sieve of length, characters, and language catches stray sparks, while patterns carve trusted shapes out of ambiguity. Edge cases serve as anchors that prevent drift. When tools align with method and discipline, reliability becomes a steadfast compass, guiding interoperability through noisy, diverse environments. In the end, precision preserves integrity.