Mixed Data Verification – 8006339110, 3146961094, 3522492899, 8043188574, 3607171624

Mixed data verification combines automated checks with targeted manual review across the listed sources to ensure data accuracy and traceability. The approach relies on provenance tracking, defined governance roles, and schema harmonization so that it scales without losing rigor. Cross-source validation aligns data domains, while sampling and anomaly tagging make audits reproducible. Deviations are documented to guide policy-aligned controls, leaving an audit trail that can be reviewed and refined as systems evolve.

What Mixed Data Verification Looks Like in Practice

Mixed data verification in practice combines automated checks with targeted manual review to ensure data accuracy across heterogeneous sources. Governance structures define responsibilities, provenance requirements, and standards, while sampling strategies select representative records for inspection. Analysts document deviations, adjust controls, and confirm alignment with policies. The approach is deliberate, reproducible, and auditable, balancing speed with rigor to sustain trust in the data.
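One way to keep the sampling step reproducible is to seed the random selection so an auditor can regenerate the same review set later. The following is a minimal sketch under assumptions: the function name `sample_for_review`, the seed value, and the `rec-NNNN` identifiers are all illustrative and do not come from any particular system.

```python
import random

def sample_for_review(record_ids, sample_size, seed=42):
    """Pick a reproducible random sample of record IDs for manual review."""
    rng = random.Random(seed)      # local generator, global random state is untouched
    ids = sorted(record_ids)       # sort first so input order cannot change the sample
    k = min(sample_size, len(ids)) # never ask for more records than exist
    return rng.sample(ids, k)

# Hypothetical record identifiers, used only for illustration.
record_ids = [f"rec-{i:04d}" for i in range(1000)]
first = sample_for_review(record_ids, 25)
second = sample_for_review(reversed(record_ids), 25)
print(first == second)
```

Recording the seed alongside the review results lets a later audit confirm which records were inspected.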

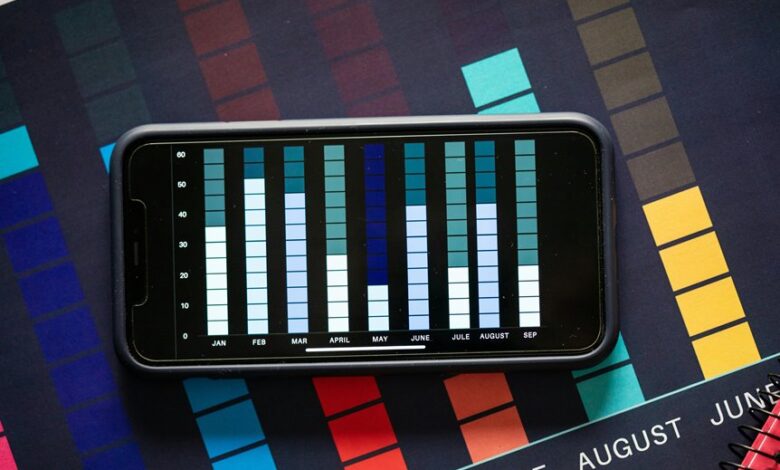

Cross-Source Validation: Techniques That Scale

Cross-source validation scales by combining automated matching, rule-based checks, and sampling to verify data consistency across heterogeneous systems.

Emphasis lies on data provenance and schema harmonization, ensuring traceable origins while aligning structures.

The approach treats cross-source validation as an architecture of checks, mappings, and audits, which builds confidence at scale without sacrificing clarity or precision for stakeholders.
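As a rough illustration of automated matching, the sketch below compares two sources that share a record key and reports missing keys and field-level disagreements. The source names (`crm`, `billing`), the field names, and the `compare_sources` helper are hypothetical, and the records are assumed to be already mapped onto a common schema.

```python
def compare_sources(source_a, source_b, fields):
    """Compare two sources keyed by record ID and report disagreements."""
    report = {"missing_in_b": [], "missing_in_a": [], "mismatches": []}
    # Walk the union of keys in sorted order so the report is deterministic.
    for key in sorted(source_a.keys() | source_b.keys()):
        if key not in source_b:
            report["missing_in_b"].append(key)
        elif key not in source_a:
            report["missing_in_a"].append(key)
        else:
            for field in fields:
                a_val = source_a[key].get(field)
                b_val = source_b[key].get(field)
                if a_val != b_val:
                    report["mismatches"].append((key, field, a_val, b_val))
    return report

# Hypothetical data from two systems, already harmonized to the same field names.
crm = {"c1": {"email": "a@example.com", "country": "US"},
       "c2": {"email": "b@example.com", "country": "DE"}}
billing = {"c1": {"email": "a@example.com", "country": "US"},
           "c2": {"email": "b@example.com", "country": "FR"},
           "c3": {"email": "c@example.com", "country": "US"}}

report = compare_sources(crm, billing, ["email", "country"])
print(report)
```

Keeping the comparison keyed and sorted means the same inputs always yield the same report, which is what makes the result usable in an audit.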

Automating Consistency Checks Without Sacrificing Accuracy

Automating consistency checks without sacrificing accuracy requires a disciplined balance between automation intensity and verification rigor. The approach emphasizes reproducible pipelines and deterministic rules, minimizing manual intervention while preserving traceability. Data lineage clarifies data origins and transformations, enabling targeted checks. Attention to error propagation reveals how faults spread downstream, informing containment and remediation without compromising overall data integrity.
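Deterministic rules can be expressed as a named list of predicates, so every failure traces back to a specific rule. This is only a sketch: the rule names, the record fields (`amount`, `currency`, `ordered`, `shipped`), and the allowed currency set are assumptions chosen for illustration.

```python
from datetime import date

# Each rule is a (name, predicate) pair; a predicate returns True when the record passes.
RULES = [
    ("amount_non_negative", lambda r: r["amount"] >= 0),
    ("currency_is_known", lambda r: r["currency"] in {"USD", "EUR", "GBP"}),
    ("ship_not_before_order", lambda r: r["shipped"] >= r["ordered"]),
]

def check_record(record):
    """Return the names of all rules the record fails, in rule order."""
    return [name for name, rule in RULES if not rule(record)]

# Hypothetical order record that violates two of the three rules.
order = {"amount": -5, "currency": "USD",
         "ordered": date(2024, 3, 2), "shipped": date(2024, 3, 1)}
failures = check_record(order)
print(failures)
```

Because the rules have no hidden state, rerunning them on the same record always gives the same list of failures.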

Handling Anomalies and Auditable Decision Trails

Handling anomalies and maintaining auditable decision trails demands a structured approach that preserves both integrity and transparency.

The process establishes data lineage through traceable events and employs anomaly tagging to categorize irregularities, enabling reproducible reviews.

Clear documentation, versioned checkpoints, and independent validation ensure decisions remain auditable, reproducible, and aligned with governance standards.
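One possible way to make a decision trail tamper-evident is to chain each entry to the hash of the previous one. The sketch below is an assumption about how such a trail could be built, not a description of any specific tool; the tag names, reviewer names, and the helpers `append_entry` and `verify_trail` are hypothetical.

```python
import hashlib
import json

def _digest(body):
    """Hash an entry body with stable key ordering."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()

def append_entry(trail, record_id, tag, decision, reviewer):
    """Append a decision to the trail, chaining it to the previous entry's hash."""
    prev_hash = trail[-1]["hash"] if trail else ""
    body = {"record_id": record_id, "tag": tag, "decision": decision,
            "reviewer": reviewer, "prev_hash": prev_hash}
    trail.append({**body, "hash": _digest(body)})
    return trail

def verify_trail(trail):
    """Recompute every hash; return False if any entry was altered or reordered."""
    prev_hash = ""
    for entry in trail:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True

trail = []
append_entry(trail, "rec-0042", "timestamp_out_of_range", "corrected", "analyst_a")
append_entry(trail, "rec-0107", "duplicate_key", "escalated", "analyst_b")
intact = verify_trail(trail)
print(intact)
```

Editing any earlier entry changes its hash and breaks the chain, so an independent reviewer can detect the alteration by rerunning `verify_trail`.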

Frequently Asked Questions

What Is the Purpose of Mixed Data Verification?

The purpose of mixed data verification is to assess data quality and integrity across diverse sources, ensuring trustworthiness. It supports data privacy and data provenance by confirming origin, transformations, and consistency, so that decisions rest on verifiable, transparent datasets.

How Do You Measure Verification Accuracy?

Verification accuracy is measured by comparing results to a trusted reference using predefined thresholds. Verification metrics and audit techniques quantify precision, recall, and error rates, and the results are reported transparently.
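To make these metrics concrete, the sketch below scores a set of flagged records against a trusted reference set of records known to be bad. The record IDs and the `verification_metrics` helper are illustrative, and the error rate is defined here as false positives plus false negatives over all records, which is one common choice rather than the only one.

```python
def verification_metrics(flagged, truly_bad, total_records):
    """Score flagged records against a trusted reference set of bad records."""
    flagged, truly_bad = set(flagged), set(truly_bad)
    tp = len(flagged & truly_bad)   # correctly flagged
    fp = len(flagged - truly_bad)   # flagged but actually fine
    fn = len(truly_bad - flagged)   # bad but missed
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    error_rate = (fp + fn) / total_records if total_records else 0.0
    return {"precision": precision, "recall": recall, "error_rate": error_rate}

# Hypothetical outcome: 4 records flagged, 5 truly bad, 100 records in total.
metrics = verification_metrics(
    flagged={"r1", "r2", "r3", "r4"},
    truly_bad={"r2", "r3", "r4", "r5", "r6"},
    total_records=100,
)
print(metrics)
```

In this example three of the four flagged records are truly bad (precision 0.75), three of the five bad records were caught (recall 0.6), and three of 100 records were misjudged (error rate 0.03).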

Which Data Sources Are Most Trustworthy?

Data provenance and data lineage help identify the most trustworthy sources. Independent audits, transparent methodologies, and enduring traceability confirm credibility, while cross-validation with primary instruments and open metadata improves reliability.

What Are Common Data Mismatch Causes?

Common data mismatches take the form of misaligned timestamps, inconsistent formats, and incomplete records. Root causes include poor data governance, integration errors, legacy systems, and human entry mistakes, all of which systematically undermine trust and accuracy.
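Misaligned timestamps and inconsistent formats are often addressed by normalizing values before comparison. The following is a minimal sketch that parses a few assumed input formats and converts them to UTC ISO 8601. The format list and the decision to treat timezone-less values as UTC are assumptions that a real pipeline would need to confirm per source.

```python
from datetime import datetime, timezone

# Assumed input formats; extend this list to match the sources actually in use.
FORMATS = ["%Y-%m-%dT%H:%M:%S%z", "%Y-%m-%d %H:%M:%S", "%d/%m/%Y %H:%M"]

def to_utc_iso(raw):
    """Parse a timestamp in one of the known formats and return it as UTC ISO 8601."""
    for fmt in FORMATS:
        try:
            parsed = datetime.strptime(raw.strip(), fmt)
        except ValueError:
            continue  # try the next known format
        if parsed.tzinfo is None:
            # Assumption: values without an offset are already in UTC.
            parsed = parsed.replace(tzinfo=timezone.utc)
        return parsed.astimezone(timezone.utc).isoformat()
    return None  # unparseable values are left for manual review

print(to_utc_iso("2024-03-01T12:00:00+0200"))
print(to_utc_iso("01/03/2024 10:00"))
```

Returning `None` instead of guessing keeps unparseable values visible, so they can be tagged as anomalies rather than silently coerced.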

How Is Privacy Preserved During Verification?

Privacy is preserved by applying strict safeguards, minimizing data exposure, and anonymizing records. Verification reliability is maintained through cryptographic proofs and auditability, so results remain accurate without revealing underlying personal details to stakeholders.
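A simple technique in this spirit is keyed hashing, which lets two sources be matched on a personal identifier without exchanging the raw value. Note that this is pseudonymization rather than full anonymization, and it is a much simpler stand-in for the cryptographic proofs mentioned above. The key, the email addresses, and the `pseudonymize` helper are placeholders for illustration.

```python
import hashlib
import hmac

def pseudonymize(value, secret_key):
    """Replace an identifier with a keyed hash so records can be matched without the raw value."""
    normalized = value.strip().lower().encode("utf-8")  # normalize so trivial variants match
    return hmac.new(secret_key, normalized, hashlib.sha256).hexdigest()

# Placeholder key for illustration; a real key would live in a secrets manager.
key = b"demo-key-not-for-production"
token_a = pseudonymize("Alice@Example.com ", key)
token_b = pseudonymize("alice@example.com", key)
print(token_a == token_b)
```

Both sources must use the same key and the same normalization for the tokens to line up, and anyone holding the key can recompute tokens for guessed identifiers, so access to the key needs to be restricted.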

Conclusion

In practice, mixed data verification aligns disparate sources through collaborative governance and clear provenance. It pairs prudent automation with deliberate human review, ensuring consistency without overreach. Anomalies are handled through transparent tagging and reproducible audits, minimizing disruption while clarifying paths for policy refinement. The result is a steady, well-documented validation process that respects complexity, preserves traceability, and strengthens trust across diverse data ecosystems.