System Data Inspection – 5052728100, looka, 3792427596, 9405511108435204385541, 5032015664

System Data Inspection combines automated checks, metadata capture, and governance policies to map data provenance and lineage across multi-domain environments. It identifies markers of where data originates, how it moves, and how it is transformed, which makes validation scalable and risk assessment continuous. Modular policies and embedded data quality checkpoints support clear accountability and regulatory alignment. As data ecosystems evolve, the same framework provides a basis for assessing trust and resilience in practice.

What System Data Inspection Is and Why It Matters

System data inspection is the systematic collection, examination, and verification of the data that computer systems produce during operation. It identifies patterns, anomalies, and trends, and shows how data flows influence decisions. It also exposes gaps in what an organization can observe about its data, which in turn informs governance. By evaluating controls and provenance, inspection supports risk mitigation and promotes transparent, accountable, and resilient operations in complex digital environments.
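As one illustration of the anomaly-finding step, the sketch below flags outliers in a series of operational measurements using a modified z-score built on the median absolute deviation (MAD). The technique choice, the `flag_anomalies` name, the 3.5 cutoff, and the sample latency values are assumptions for illustration, not part of any specific inspection product.

```python
from statistics import median

def flag_anomalies(values, threshold=3.5):
    """Return indices of values whose modified z-score exceeds threshold.

    The score uses the median and the median absolute deviation (MAD),
    which are robust to the very outliers being searched for. The 0.6745
    factor scales MAD so scores are comparable to ordinary z-scores for
    normally distributed data.
    """
    if not values:
        return []
    med = median(values)
    mad = median(abs(v - med) for v in values)
    if mad == 0:
        # MAD is zero when one repeated value dominates the data:
        # flag anything that differs from the median.
        return [i for i, v in enumerate(values) if v != med]
    return [i for i, v in enumerate(values)
            if abs(0.6745 * (v - med) / mad) > threshold]

# Hypothetical request latencies (ms) collected from one service.
latencies_ms = [102, 98, 105, 101, 99, 97, 940, 103]
print(flag_anomalies(latencies_ms))  # [6]
```

A robust statistic is used here because a plain mean and standard deviation would be inflated by the outlier itself, which can hide it.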

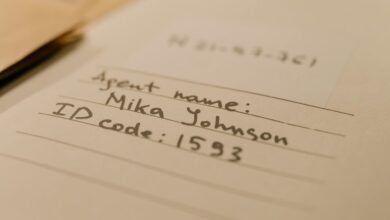

Key Identifiers and What They Signal in Data Flows

Key identifiers in data flows act as diagnostic markers: they signal where information originates, how it moves, and how it is transformed across systems. They reveal data provenance, track lineage, and indicate processing stages, which makes correlation and auditing possible. For example, a record ID that persists from ingestion through to a report lets an auditor trace a reported figure back to its source. Clear identifiers also support robust access controls, make anomalies easier to detect, and reduce risk by exposing where data originates, travels, and changes state within multi-domain environments.
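The identifiers described above can be modeled as a stable record ID plus an ordered list of movement and transformation markers. This is a minimal sketch; the `LineageRecord` class, its field names, and the system names in the example are hypothetical.

```python
import uuid
from dataclasses import dataclass, field
from datetime import datetime, timezone

@dataclass
class LineageRecord:
    origin: str  # system where the data was first produced
    # Stable identifier that travels with the data across systems.
    record_id: str = field(default_factory=lambda: str(uuid.uuid4()))
    # Ordered (stage, system, UTC timestamp) markers, one per hop.
    hops: list = field(default_factory=list)

    def mark(self, stage: str, system: str) -> None:
        """Append a movement or transformation marker to the lineage."""
        self.hops.append((stage, system, datetime.now(timezone.utc).isoformat()))

    def path(self) -> list:
        """Return the systems the record passed through, starting at its origin."""
        return [self.origin] + [system for _, system, _ in self.hops]

# Hypothetical pipeline: billing database -> ETL worker -> warehouse.
rec = LineageRecord(origin="billing-db")
rec.mark("extract", "etl-worker")
rec.mark("transform:currency-normalize", "etl-worker")
rec.mark("load", "warehouse")
print(rec.record_id, rec.path())
```

Because each marker names both the stage and the system, the same record answers "where did this come from?" (provenance) and "what happened to it on the way?" (lineage).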

Practical Techniques: Automated Inspection, Metadata, and Compliance

Automated inspection, metadata capture, and compliance monitoring operationalize the insights from identifiers by turning manual review into repeatable, scalable processes.

The approach emphasizes data quality checkpoints, continuous risk assessment, and traceable data lineage, enabling auditable decisions.
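A data quality checkpoint that also leaves an audit entry might look like the following minimal sketch. The `checkpoint` function, the audit-entry fields, and the sample rows are hypothetical; a real program would write entries to durable, access-controlled storage rather than an in-memory list.

```python
import json
from datetime import datetime, timezone

def checkpoint(name, rows, predicate, audit_log):
    """Run one quality check over rows and append an auditable result entry.

    Returns the rows that passed, so checkpoints can be chained.
    """
    passed = [r for r in rows if predicate(r)]
    audit_log.append({
        "checkpoint": name,
        "checked_at": datetime.now(timezone.utc).isoformat(),
        "rows_in": len(rows),
        "rows_failed": len(rows) - len(passed),
    })
    return passed

log = []
rows = [{"id": 1, "amount": 20.0}, {"id": 2, "amount": None}, {"id": 3, "amount": -5.0}]
rows = checkpoint("amount_not_null", rows, lambda r: r["amount"] is not None, log)
rows = checkpoint("amount_non_negative", rows, lambda r: r["amount"] >= 0, log)
print([r["id"] for r in rows])  # [1]
print(json.dumps(log, indent=2))
```

Ordering matters in this sketch: the null check runs first so the second predicate never compares `None` with a number.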

Automated policy enforcement strengthens access controls and keeps operational practice aligned with governance requirements, while leaving room for new kinds of data use across diverse environments.
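Automated policy enforcement can be as simple as applying a declarative role-to-fields policy on every read. The sketch below assumes a hypothetical `POLICY` mapping and role names; it masks fields a role may not read and denies everything to roles the policy does not name.

```python
# Hypothetical policy: which fields each role may read.
POLICY = {
    "analyst": {"id", "region", "amount"},
    "auditor": {"id", "region", "amount", "customer_email"},
}

def enforce(record: dict, role: str) -> dict:
    """Return a copy of record with disallowed fields replaced by a mask.

    Unknown roles receive no readable fields (deny by default).
    """
    allowed = POLICY.get(role, set())
    return {k: (v if k in allowed else "***") for k, v in record.items()}

row = {"id": 7, "region": "EU", "amount": 42.5, "customer_email": "a@example.com"}
print(enforce(row, "analyst"))
# {'id': 7, 'region': 'EU', 'amount': 42.5, 'customer_email': '***'}
```

Keeping the policy as data rather than scattered `if` statements makes it reviewable by governance staff and changeable without touching the enforcement code.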

Building a Scalable, Trusted Data Inspection Program

How can an organization build a data inspection program that scales, earns trust, and stays rigorous as its data landscape changes? The approach unifies data governance, provenance, and lineage with ongoing data quality assessment. Governance frameworks standardize controls, while provenance records improve auditability. Scalable instrumentation, automated validation, and modular policies help keep inspection consistent, transparent, and resilient as data ecosystems and regulatory expectations change.
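Modular policies can be sketched as a registry of independent checks that the program enables per dataset. The decorator-based registry, the check names, and the source allowlist below are illustrative assumptions, not a prescribed design.

```python
from typing import Callable, Dict, List

# A check takes one record and returns a list of findings (empty list = pass).
Check = Callable[[dict], List[str]]
REGISTRY: Dict[str, Check] = {}

def register(name: str) -> Callable[[Check], Check]:
    """Decorator that adds a check to the registry under a stable name."""
    def wrap(fn: Check) -> Check:
        REGISTRY[name] = fn
        return fn
    return wrap

@register("required_fields")
def required_fields(rec: dict) -> List[str]:
    return [f"missing {f}" for f in ("id", "source") if f not in rec]

@register("source_allowlist")
def source_allowlist(rec: dict) -> List[str]:
    allowed = {"crm", "billing"}  # hypothetical allowlist
    src = rec.get("source")
    # A missing source is reported by required_fields, so it is not repeated here.
    return [] if src is None or src in allowed else [f"unexpected source {src!r}"]

def run_checks(rec: dict, enabled: List[str]) -> Dict[str, List[str]]:
    """Run only the enabled checks and return those that produced findings."""
    results = {name: REGISTRY[name](rec) for name in enabled}
    return {name: findings for name, findings in results.items() if findings}

print(run_checks({"id": 1, "source": "scraper"}, ["required_fields", "source_allowlist"]))
# {'source_allowlist': ["unexpected source 'scraper'"]}
```

Each check stays small and separately testable, and a dataset's inspection profile becomes a list of names rather than a code change.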

Frequently Asked Questions

How Does System Data Inspection Handle Encrypted Data?

Inspection of encrypted data must preserve confidentiality: payloads are decrypted only under managed keys and access controls, and checks such as integrity verification can often run without decrypting the payload at all. Auditing requirements call for traceability, non-repudiation, and thorough logging, so that results remain verifiable without compromising data integrity or privacy constraints.
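One way to inspect encrypted data without reading it is to verify an integrity tag over the ciphertext. This minimal sketch assumes the producer attaches an HMAC-SHA256 tag over the ciphertext and that the inspector is issued a separate MAC key but not the decryption key; the envelope layout, `verify_envelope`, and the key and byte values are hypothetical.

```python
import hashlib
import hmac

def verify_envelope(envelope: dict, mac_key: bytes) -> bool:
    """Check an encrypted payload's integrity tag without decrypting it.

    The inspector holds only the MAC key, not the decryption key, so it can
    confirm the ciphertext is unaltered while the plaintext stays confidential.
    """
    expected = hmac.new(mac_key, envelope["ciphertext"], hashlib.sha256).hexdigest()
    # compare_digest is designed to resist timing attacks on the tag comparison.
    return hmac.compare_digest(expected, envelope["tag"])

# Hypothetical values: a real MAC key would come from a key manager, and the
# ciphertext would be produced by the data owner's encryption step.
key = b"inspection-mac-key"
ct = b"\x8f\x02\x11opaque-ciphertext"
env = {"ciphertext": ct, "tag": hmac.new(key, ct, hashlib.sha256).hexdigest()}

print(verify_envelope(env, key))                              # True
print(verify_envelope(dict(env, ciphertext=ct + b"x"), key))  # False
```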

What Are Cost Drivers for Large-Scale Inspections?

The main cost drivers are data volume, processing complexity, and audit scope. Low-risk pilots favor phased deployment, while scalable governance reduces long-term costs through modular tooling and standardized workflows, which make resource allocation more efficient and inspections repeatable.

Can Inspection Results Be Audited by External Parties?

Yes. External audits of inspection results are possible when methodologies are transparent and data trails are traceable. These practices make results easier for outside parties to audit and lend them external legitimacy, while preserving analytical rigor and objectivity and leaving stakeholders free to reach their own interpretations.
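One common way to give outside auditors a trail they can verify independently is a hash chain, where each entry's hash covers the previous entry's hash. The sketch below uses SHA-256 from the Python standard library; the entry layout and function names are hypothetical, and a production trail would typically also be signed or anchored externally.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

def append_entry(chain: list, finding: dict) -> None:
    """Append a finding whose hash covers the previous entry's hash."""
    prev = chain[-1]["hash"] if chain else GENESIS
    body = json.dumps(finding, sort_keys=True)
    digest = hashlib.sha256((prev + body).encode()).hexdigest()
    chain.append({"finding": finding, "prev": prev, "hash": digest})

def verify_chain(chain: list) -> bool:
    """Recompute every hash; an edited, inserted, or reordered entry breaks the chain.

    Truncation at the end is only detectable if the auditor also holds the
    latest hash published by the inspection team.
    """
    prev = GENESIS
    for entry in chain:
        body = json.dumps(entry["finding"], sort_keys=True)
        if entry["prev"] != prev:
            return False
        if hashlib.sha256((prev + body).encode()).hexdigest() != entry["hash"]:
            return False
        prev = entry["hash"]
    return True

trail = []
append_entry(trail, {"check": "amount_non_negative", "failed": 1})
append_entry(trail, {"check": "required_fields", "failed": 0})
print(verify_chain(trail))           # True
trail[0]["finding"]["failed"] = 0    # simulate tampering with a recorded result
print(verify_chain(trail))           # False
```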

How Are False Positives Minimized in Automated Checks?

False positives are minimized through layered validation and calibration. Detection thresholds are tuned against known-good data, a flag from a single check is routed for review rather than escalated automatically, and precision is tracked through ongoing performance monitoring. Transparent rules and staff training help reviewers recognize the false positives that remain.
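Calibration and layering can be combined in a few lines. This minimal sketch assumes a labeled sample of known-benign anomaly scores and a separate rule-based check; the function names, the 10% target rate, and the score values are hypothetical. The threshold is set so that at most the target fraction of benign scores would be flagged, and escalation then requires both layers to agree.

```python
import math

def calibrate_threshold(benign_scores, max_fp_rate):
    """Pick a threshold so that at most max_fp_rate of known-benign scores
    are flagged, where a score is flagged when it is strictly above it."""
    if not benign_scores:
        raise ValueError("need at least one known-benign score")
    ranked = sorted(benign_scores)
    n = len(ranked)
    # Round down so the tolerated number of false positives never exceeds the target.
    allowed = math.floor(max_fp_rate * n)
    # At most `allowed` sorted scores lie after index k, so at most
    # `allowed` benign scores can be strictly greater than ranked[k].
    k = max(n - allowed - 1, 0)
    return ranked[k]

def should_escalate(score, rule_hit, threshold):
    """Second layer: escalate only when the calibrated score AND an
    independent rule-based check both indicate a problem."""
    return score > threshold and rule_hit

benign = [0.1, 0.2, 0.15, 0.3, 0.25, 0.4, 0.35, 0.5, 0.45, 0.9]
t = calibrate_threshold(benign, max_fp_rate=0.1)
print(t)                              # 0.5
print(should_escalate(0.8, True, t))  # True
print(should_escalate(0.8, False, t)) # False: one layer alone is not enough
```

Requiring agreement between independent layers trades some recall for precision, so the target rate and the rule set would need periodic re-tuning as data changes.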

What Training Is Needed for Staff to Interpret Findings?

Interpreting findings requires structured training that combines quantitative criteria with contextual judgment. Training should emphasize calibration exercises, awareness of common errors, and rigorous documentation, so that interpretation stays consistent across teams while staff keep the autonomy and critical thinking that decision workflows need.

Conclusion

System Data Inspection consolidates automated inspection, metadata capture, and governance to make data provenance and lineage visible across domains. The approach enables continuous risk assessment, auditable decisions, and scalable quality checks, supporting regulatory alignment and resilient governance. A common objection is that it is complex; however, modular policies and clear provenance markers reduce the effort over time and make trust, traceability, and accountability easier to demonstrate as data ecosystems evolve. In sum, disciplined inspection supports confident, compliant, data-driven decisions.